Mobile marketers are stuck between a rock and a hard place. On one hand, they’re in charge of optimizing their storefronts in the Apple App Store and Google Play Store, faced with the challenging goal of driving measurable results. On the other hand, they must deal with limited tools, limited data, and constantly changing rules (like dynamic app store algorithms) as they attempt to achieve their business goals.

That said, successful mobile marketers don’t give up and accept the current landscape as is. They leverage what they can to drive growth for their apps and games and help their companies navigate the stormy app store waters. But many aren’t so lucky. They may understand that app store optimization (ASO) testing should be a critical component of their mobile marketing strategy, but they’re not uncovering actionable insights or results when doing so.

After analyzing thousands of Google Experiments and app store creative tests, we discovered what makes some companies successful in their app store testing efforts and others not. To ensure you maximize the benefits from your tests, we compiled our findings into a list of the 7 deadly app store testing sins to avoid at all costs.

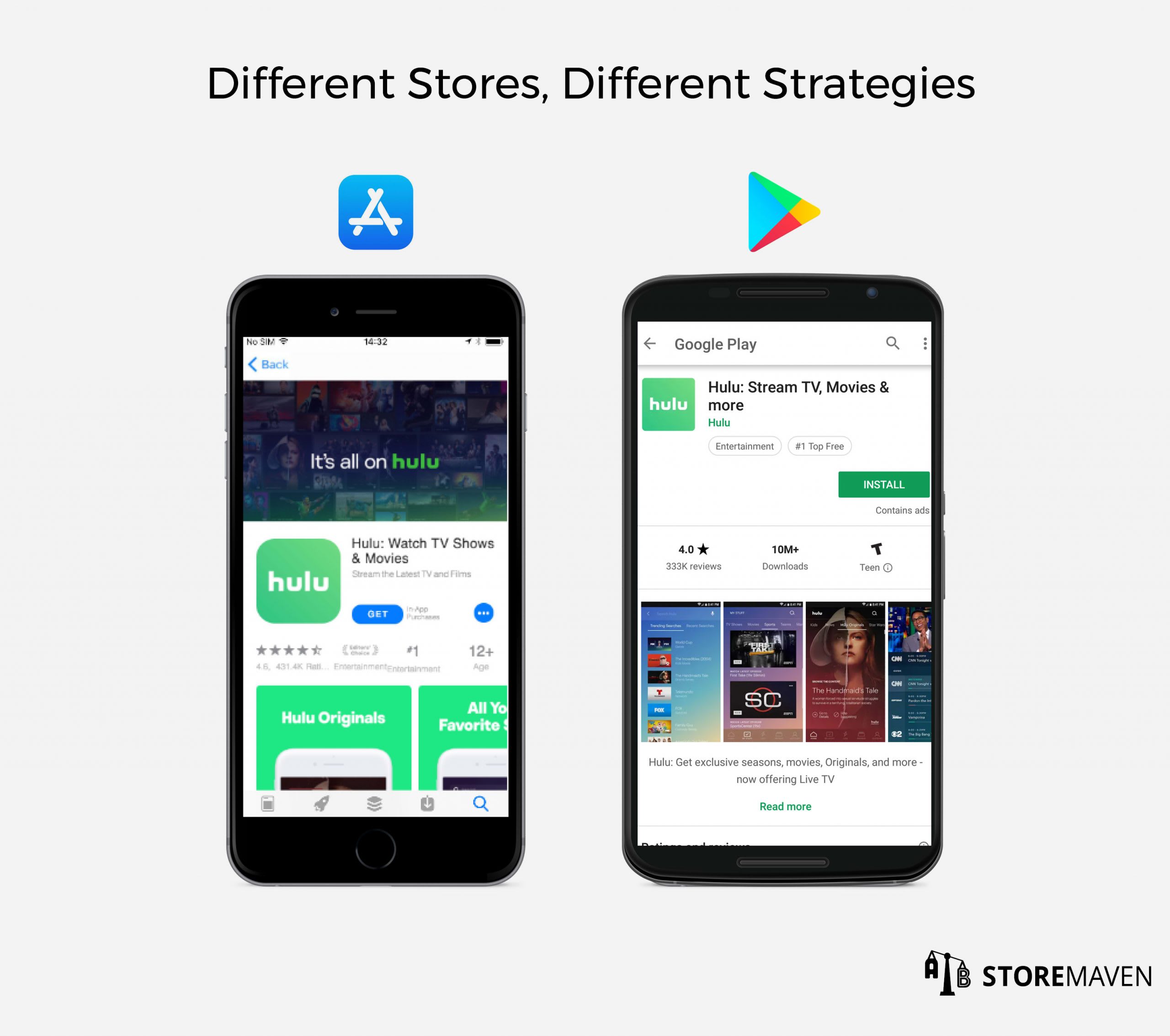

Sin #1 – Testing on Google Experiments and Implementing Results on the Apple App Store

In almost every situation, testing on Google Experiments and applying the results to the Apple App Store can actually harm your conversion rate (CVR) on iOS. In fact, we’ve found that implementing the same creatives on both platforms can lead to a 20-30% decrease in installs on the Apple App Store.

This is because the two platforms are different in many ways:

- Android users are simply different from iPhone users: the two audiences have distinct behaviors and preferences

- Despite the Google Play redesign, the layouts are still different (e.g., no autoplay on Google Play, different image resolutions, etc.), which leads to different visitor behavior

- Competition differs across both platforms

- Google Experiments itself isn’t a sufficient ASO tool on its own, so in general, it won’t reveal enough insights that you can apply to iOS in the first place

This is a dangerous mistake because it’s so commonly recommended. More often than not, though, the people suggesting this approach are agencies that don’t have access to sophisticated testing technology. For that reason, they stick to their only available option, which is running a Google Play Store Listing Experiment, and then using the winning variation on iOS.

Sin #2 – Optimizing for the Wrong Audience

When running an app store test, it’s crucial that you segment your audience strategically in order to drive valuable results that will actually impact your company’s top and bottom lines. That said, many companies still optimize for the wrong audience.

Let’s examine two common mistakes:

- A well-known brand was featured in the top charts while it ran a Google Play Store Listing Experiment. As a result, it received a significant amount of traffic from a broader, lower-quality audience browsing the Google Play Store. In reality, this brand has a specific age and demographic group that provides greater lifetime value (LTV), but these high-quality users were drowned out by all the installs coming from browse traffic. When the test concluded and a winner was declared, the results were skewed in favor of the variation that was most effective at converting the low-quality audience. This led the company to update its Store Listing with an asset optimized for an audience that isn’t its core, high-value user group.

- A mobile brand runs a test in a sandbox environment and samples users only from a low-quality ad network, since those users are less expensive to acquire and drive to the test. After the test concludes, the brand deploys the winning variant in the store, only to find out that it optimized its assets to convert low-quality users and most likely lowered conversion for its high-quality traffic.

When this happens, it’s difficult to justify investing resources into app store testing because top level stakeholders don’t see it impacting company-wide KPIs or sufficiently moving the needle.

Instead, companies should research which audiences provide them with the highest value (e.g., LTV, retention, etc.) and ensure their test sample is representative of those audiences.
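To make the skew concrete, here is a minimal Python sketch of how a blended result can point to a different winner than the core audience does. All figures, segment names, and the `SegmentResult` helper are hypothetical and chosen only for illustration; they are not taken from any real test.

```python
from dataclasses import dataclass


@dataclass
class SegmentResult:
    """Visitors and installs for one audience segment of one variation."""
    visitors: int
    installs: int

    @property
    def cvr(self) -> float:
        return self.installs / self.visitors


def blended_cvr(segments: dict[str, SegmentResult]) -> float:
    """CVR across all segments combined, as a store-level test would report it."""
    total_visitors = sum(s.visitors for s in segments.values())
    total_installs = sum(s.installs for s in segments.values())
    return total_installs / total_visitors


# Hypothetical test: 90% of the traffic is low-value browse traffic.
variant_a = {"browse": SegmentResult(9000, 2700), "core": SegmentResult(1000, 200)}
variant_b = {"browse": SegmentResult(9000, 2430), "core": SegmentResult(1000, 350)}

# Winner on the blended number vs. winner for the high-value core audience.
overall_winner = "A" if blended_cvr(variant_a) > blended_cvr(variant_b) else "B"
core_winner = "A" if variant_a["core"].cvr > variant_b["core"].cvr else "B"

print(f"Blended CVR: A={blended_cvr(variant_a):.1%}, B={blended_cvr(variant_b):.1%}")
print(f"Core CVR:    A={variant_a['core'].cvr:.1%}, B={variant_b['core'].cvr:.1%}")
print(f"Overall winner: {overall_winner}, core-audience winner: {core_winner}")
```

In this made-up scenario, variation A wins on the blended number while variation B converts the core audience far better, which is exactly the situation described in the first mistake above.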

Sin #3 – Measuring Results in an Invalid Way

Even if your test is run flawlessly, it’s easy to make the mistake of measuring results using a model that’s too simplistic.

For example, some companies use pre- and post-test analyses that calculate the average CVR over the two weeks before the change and compare it to the average CVR after the change.

This approach fails to take into account many variables that affect conversion and app units in the store, such as keyword ranking, overall and category ranking, being featured, the level of user acquisition (UA) spending, competitors’ changes (e.g., creative updates, UA spending, etc.), and more. It’s crucial to develop modeling methods that establish a true baseline, one that accounts for these fluctuations, in order to measure the real impact of any change.

Without accurately measuring the impact, companies can arrive at the wrong conclusions: that creative changes don’t matter, or that conversion rates were hurt when, in reality, they experienced a positive uplift.
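As a rough illustration of why the baseline matters, the sketch below compares a naive pre/post calculation with one adjusted by a control series. The control series (for example, a comparable market where the creatives did not change) is our assumption for this example, as are all the numbers and function names; a production model would need to account for many more of the variables listed above.

```python
def naive_uplift(pre_cvr: float, post_cvr: float) -> float:
    """Relative change in CVR, ignoring everything else that moved."""
    return (post_cvr - pre_cvr) / pre_cvr


def baseline_adjusted_uplift(
    pre_cvr: float,
    post_cvr: float,
    control_pre_cvr: float,
    control_post_cvr: float,
) -> float:
    """Relative change versus the CVR we would expect without the update.

    The expected CVR is the pre-change CVR scaled by the drift observed
    in the control series over the same two periods.
    """
    drift = control_post_cvr / control_pre_cvr
    expected_post_cvr = pre_cvr * drift
    return (post_cvr - expected_post_cvr) / expected_post_cvr


# Hypothetical figures: CVR dipped after the creative update, but the
# control series dropped even more over the same period.
naive = naive_uplift(pre_cvr=0.30, post_cvr=0.29)
adjusted = baseline_adjusted_uplift(
    pre_cvr=0.30, post_cvr=0.29, control_pre_cvr=0.30, control_post_cvr=0.27
)

print(f"Naive pre/post uplift:    {naive:+.1%}")
print(f"Baseline-adjusted uplift: {adjusted:+.1%}")
```

With these invented numbers, the naive comparison shows a decline while the adjusted one shows a gain, which mirrors the wrong conclusion described above.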

Sin #4 – Testing without Direction

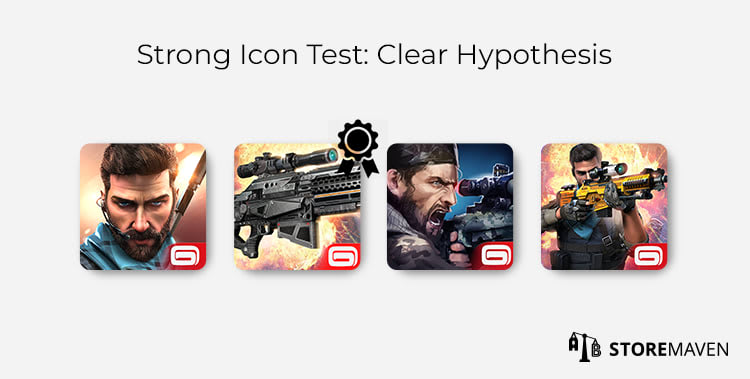

Some marketers are under intense pressure to run as many tests as they can, as quickly as possible. This usually means they don’t take the time to conduct proper research and develop strong hypotheses to test. It’s easy to test minor or subtle changes that won’t end up making an impact, and using weak hypotheses makes it hard to uncover actionable insights that can be used in subsequent tests.

For example, a marketer may run an Icon test in which each variation showcases the same character, in the same position, with the same facial expression. The only distinction is which hand the character holds the game weapons in. Even if a winning variation is found, the designs themselves are too similar to extract any valuable insights, and there’s no clear direction or hypothesis driving the test.

It would be more effective to run an Icon test with three distinct variations (character only, gameplay element only, and a combination of character and gameplay element) to get a clear winner, as you can see in the example above.

Hypotheses are the pillars of every ASO test you run. Make sure you use them to your advantage to pinpoint which specific features, characters, or elements of your app or game are most important to installers. The consequence of not investing enough time and effort into developing a well-researched and long-term ASO strategy is that test success rates tend to be much lower (and the Control Version will keep on winning).

Sin #5 – Concluding Tests Prematurely

Another common thread among tests that fail is that they are usually closed too early. There are many external factors that can impact installs, and ending tests prematurely doesn’t allow you to account for these factors.

For example, let’s say a mobile game’s highest quality audience is more active on the weekend than in the middle of the week. If a test is concluded mid-week after only 4 or 5 days of receiving traffic, then there won’t be enough samples or data coming from their target users to generate accurate results. This means a wrong or misleading conclusion might be accepted, and the company will miss out on uncovering key insights to optimize for their high value audience.

Additionally, it’s rare to see a variation that is the winner every single day of the test. You’ll want a wider date range to give you the confidence of consistency. We recommend running tests for a minimum of 7 days and typically see them run for an average of 10 days.
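These duration checks can be sketched in a few lines of Python. The function name, the input shape (one control/variant CVR pair per day), and the sample figures are hypothetical; the 7-day minimum comes from the recommendation above.

```python
from datetime import date, timedelta

MIN_DAYS = 7  # minimum test length recommended above


def review_test(start: date, daily_cvr: list[tuple[float, float]]) -> dict:
    """Summarize whether a test ran long enough to trust.

    daily_cvr holds one (control_cvr, variant_cvr) pair per day,
    beginning on the start date.
    """
    days_run = len(daily_cvr)
    weekdays_seen = {
        (start + timedelta(days=i)).weekday() for i in range(days_run)
    }
    variant_wins = sum(1 for control, variant in daily_cvr if variant > control)
    return {
        "days_run": days_run,
        "meets_minimum": days_run >= MIN_DAYS,
        # A full week of traffic includes the weekend audience.
        "covers_all_weekdays": len(weekdays_seen) == 7,
        "variant_win_share": variant_wins / days_run if days_run else 0.0,
    }


# Hypothetical test closed after five days: the variant looks unbeatable,
# but no weekend traffic was ever sampled.
short_test = review_test(date(2024, 1, 1), [(0.30, 0.32)] * 5)

# The same test left running for ten days: the variant still leads,
# but not on every single day.
long_test = review_test(
    date(2024, 1, 1),
    [(0.30, 0.32)] * 5 + [(0.31, 0.30), (0.31, 0.30)] + [(0.30, 0.32)] * 3,
)

print(short_test)
print(long_test)
```

The point of the win-share figure is not that a variation must win every day, but that a longer window shows whether its lead is consistent.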

Sin #6 – Not Updating the App Stores Promptly

In some cases, mobile marketing teams that invest a lot of time and effort running great tests are slow to update the store with winning creatives and messaging. If a company finds better-converting creatives in January but only implements them in April or May, for example, then the audience they originally optimized for has already changed.

Your audience preferences and tastes change rapidly with trends. Similarly, your competitors continuously update the creatives and messaging on their stores, thus changing the content that your audience interacts with when looking for a new app or game.

Failing to update your app store page in a timely manner will make it much more difficult to see a positive increase in conversion rates.

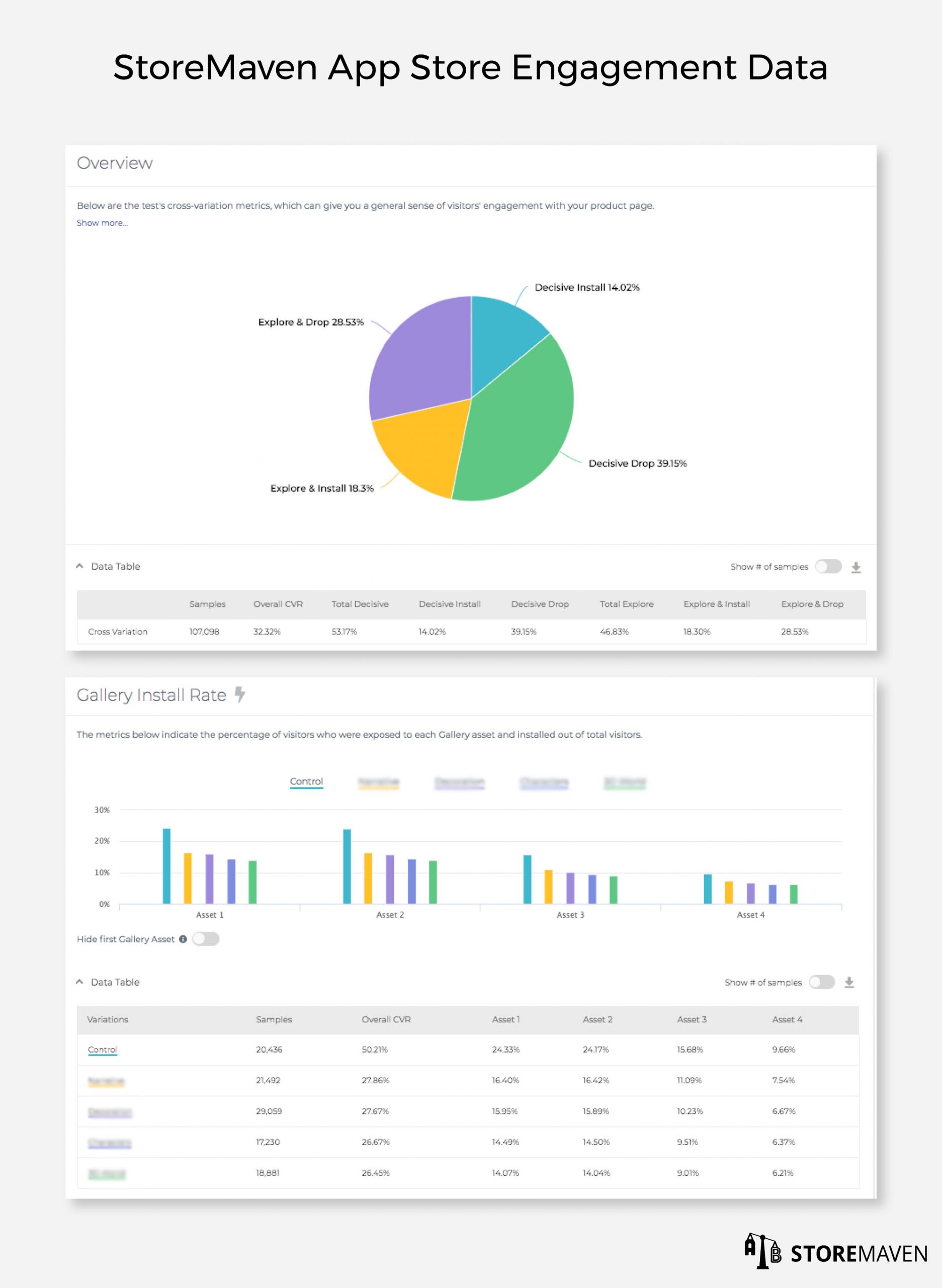

Sin #7 – Not Receiving (or Analyzing) App Store Engagement Data

The last sin is one of the most important mistakes to avoid. App store behavior data, as you can see in the example above, reveal important insights such as how engaged your visitors were, how they behaved on your page, which messages convinced them to install, how long they spent interacting with different assets, and more. Most importantly, though, this type of data helps you uncover why a certain variation won. Without these metrics (for instance, if you use Google Experiments and only have CVR data), it’s difficult (if not impossible) to maintain an ongoing CVR optimization loop.

For example, let’s assume you ran an Icon test and managed to find a better converting variation that highlights a well-known character. You implement the results and start to plan your next test without understanding why that variant won and how it impacted the way visitors behaved on your page.

Little did you know that it won because it encouraged visitors to explore your page, thus exposing them to Screenshots 3-5. These Screenshots completed the experience for the installers because they showcased that same character and got visitors excited about the game.

Without knowing exactly why one variant performed better than another, you run the risk of conducting a test on the later Screenshots and removing a significant part of why the Icon worked in the first place. This makes it difficult to drive improvement and tends to lead to a negative growth pattern in which you take one step forward just to take two steps back.

The Outcome of These Deadly Sins

Mobile brands that are successful in the App Store and Google Play have intimate knowledge of what their audience wants, how they arrive at decisions, and what triggers them emotionally and socially. The top tool in their toolbox that enables this success is app store testing. Unfortunately, mobile publishers that commit one or more of the deadly sins we described above will arrive at the incorrect conclusion that testing doesn’t work or isn’t for them.

This conclusion has more (negative) strategic implications than they may realize. It gives their competitors an unfair advantage as they continue to stay one step ahead and adapt to the changing needs and wants of their audience through continuous testing. This also means their competitors will enjoy higher conversion, higher growth rates, and lower user acquisition costs.

To regain your competitive advantage and ensure sustainable success, make sure you take full advantage of the benefits that app store testing can provide and avoid these deadly sins.

—

StoreMaven is the leading app store optimization (ASO) platform that helps global mobile publishers like Google, Zynga, and Uber test their Apple App Store and Google Play Store marketing assets and understand visitor behavior. If you’re interested in testing your creatives, schedule a demo with us.