There’s ASO testing, and then there’s ASO testing with audience segmentation.

You can do the former, but if you’re going to do that you might as well do the latter. Because the latter is where you’ll get the real data you need.

We know not all installs are created equal. It’s about quality. And value. Those are the installs that lead to real growth: retention of high lifetime value users.

Yes, any broad ASO testing can get you the basic results on which creatives convert best: creative asset combination A beats creative asset combination B, etc. With this mindset, you’d simply run a test, input the winning creative asset combination in the live app store and sit back, put your feet up and watch those installs come pouring in. If only. We all know the reality is not like that. Installs don’t just come pouring in. You can’t sit back and relax. And you can’t simply input the winning creatives and then stop. Optimization isn’t like that. Optimization is a constant uphill battle and the only way to get to the top first (well, there is no hard top, but that’s another blog for another day) is to be better than your competitors at conversion. And the only way to improve conversions is to get better at knowing what your highest-prized users want, and what they want to hear. The marketer that best gets inside the heads of their target audience is the marketer that can sell ice to Eskimos.

But how can you know what Eskimos think about your app store?

The only information you get from App Store Connect is the sum total of users taking each journey to the store before installing. Were they referral or organic? And if organic, browse or search? It tells you the split of total users who installed or dropped, nothing more, nothing less. We can do that too. As well as this:

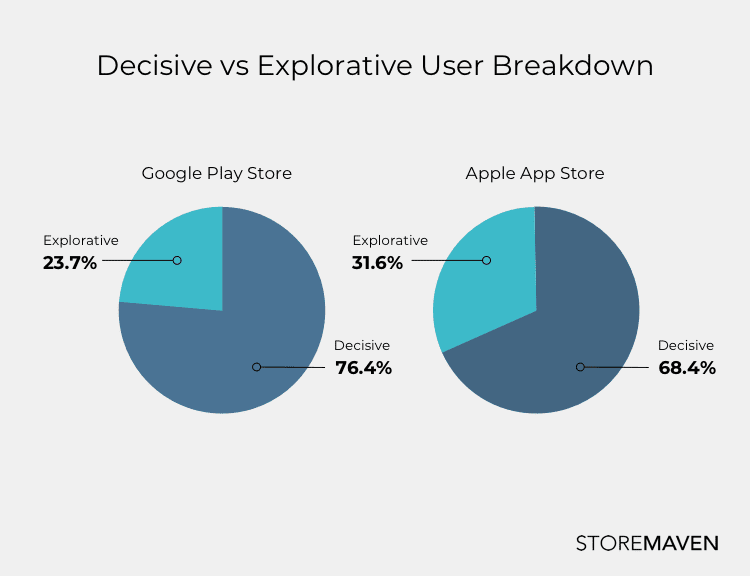

You can see that in both stores decisiveness is far more common among users than the urge to explore. For these users, decisions are made (not instantly but simply) based on the First Impression without the need to search for more information. What decisive users see when they first open your page is enough. They have made up their mind. They will either drop or install. The why of their behavior will remain a mystery: we cannot track their eyes to see what they look at or what they read.

But there is a way to know more.

Our testing allows you to get inside their heads. What impacts decisiveness can be discovered by iterating asset by asset through the First Impression. We can go beyond the overall data that Apple and Google provide and give you real insights into how your users behave. Once you know how they behave, it’s just a hop, skip and a jump to working out how they think.

What is an audience actually?

Ah, audiences, the holy grail of generalizations and categorizations that allow us to understand the world, to understand our politics, our communities and, yes, our businesses and our users. It’s how humans have been making sense of the world since hunter-gatherer days. We group others by common traits, backgrounds, behaviors and origins. We then make a whole lot of assumptions and stereotypes based on those factors and use them to predict and analyze their behavior and how we should interact with them.

So too with our business, we just use data. The more we can segment users into distinct groups, the easier they are to understand. And once we can understand them, then we can cater to them and their every whim (well, maybe not every, but the ones we think are worth encouraging).

In our industry, audiences can be looked at in two ways (and often are). Audiences can be defined demographically by age, gender, location, interests, etc. (a classical marketing definition) or by traffic sources and the journey they took to get to your store page (a classical User Acquisition definition).

The real insights emerge when you combine both audience definitions, segment on them together and see trends in behaviors among these segmented groups.

What behavior can we look at?

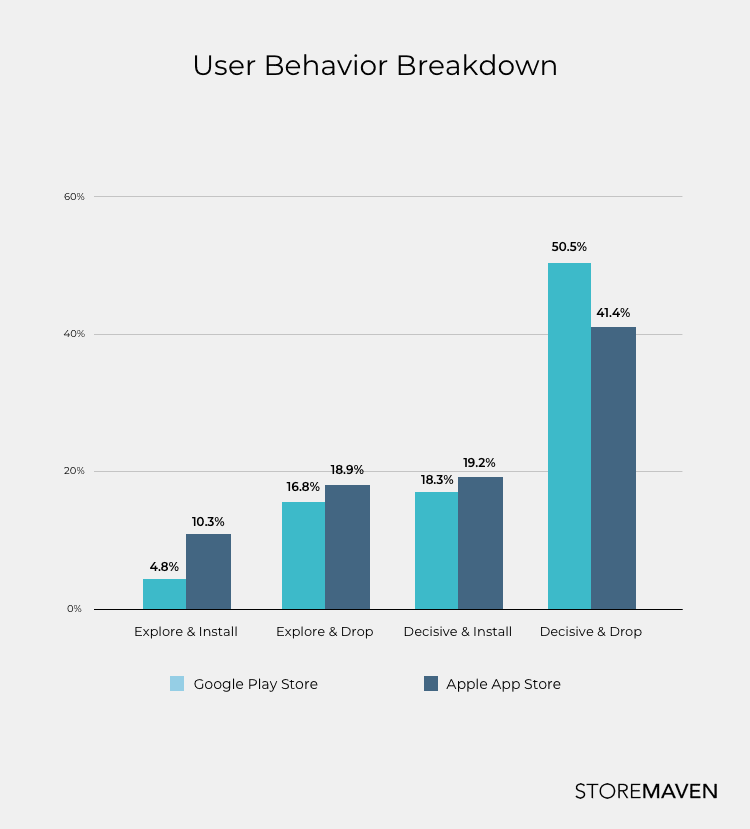

On the most basic level, we divide users by outcome (install versus drop) and by how they came to their decision (decisive versus explorative).
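To make the four groups concrete, here is a minimal sketch of that split. The `Visit` record and its two flags are assumptions made up for illustration, not the format of any real testing tool, and the sample visits are invented.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Visit:
    installed: bool  # outcome: did the visitor install?
    explored: bool   # hypothetical flag: any action beyond the First Impression


def classify(visit: Visit) -> str:
    """Label a visit by how the decision was made and what the decision was."""
    style = "explorative" if visit.explored else "decisive"
    outcome = "install" if visit.installed else "drop"
    return f"{style} {outcome}"


def behavior_breakdown(visits: list[Visit]) -> dict[str, float]:
    """Share of visits that falls into each behavior group."""
    counts = Counter(classify(v) for v in visits)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


# Invented sample data, for illustration only.
visits = [
    Visit(installed=True, explored=False),
    Visit(installed=False, explored=False),
    Visit(installed=False, explored=False),
    Visit(installed=True, explored=True),
]
breakdown = behavior_breakdown(visits)
print(breakdown)
```

Every later cut of the data (by traffic source, by demographic, by creative) can reuse the same four labels.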

Which assets are visible in the First Impression differs between app stores. The layout of Google Play creates an environment that facilitates more decisiveness. iOS has a greater explore rate. Our testing can track two variables: time on page and exploration activities (including time spent on each exploration activity). We can track how many screenshots a specific user swiped through. Did they read reviews? Which ones? How many? Which assets was the user exposed to and for how long? And vitally, what was the last thing they did before converting or dropping? This can be done for each and every user.
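As a rough illustration of what per-user tracking could yield, the sketch below summarizes one visitor's session from a timestamped event log. The log format, the action names and the `summarize` helper are all hypothetical and chosen only for this example.

```python
from collections import defaultdict

# Hypothetical event log for one visitor: (seconds since page load, action).
events = [
    (0.0, "view_first_impression"),
    (3.5, "swipe_screenshot"),
    (6.0, "swipe_screenshot"),
    (9.0, "read_review"),
    (14.0, "install"),
]

OUTCOMES = {"install", "drop"}


def summarize(events):
    """Time on page, time per activity, and the last action before the outcome."""
    if not events:
        return None
    events = sorted(events)
    time_per_action = defaultdict(float)
    # Time spent on an action is the gap until the next event starts.
    for (t, action), (t_next, _) in zip(events, events[1:]):
        time_per_action[action] += t_next - t
    final_action = events[-1][1]
    prior = [action for _, action in events if action not in OUTCOMES]
    return {
        "time_on_page": events[-1][0] - events[0][0],
        "screenshots_swiped": sum(a == "swipe_screenshot" for _, a in events),
        "time_per_action": dict(time_per_action),
        "last_action": prior[-1] if prior else None,
        "outcome": final_action if final_action in OUTCOMES else None,
    }


summary = summarize(events)
print(summary)
```

In this invented session the visitor read a review right before installing, which is exactly the kind of "last thing they did" signal described above.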

So where do the audiences come into play?

The fact that during testing we can track each individual user’s behavior and link that back to where they came from (traffic source) and who they are (demographic targeting) means the (explorative or decisive) activity can be linked to specific general audiences – uncovering commonalities and trends amongst your user segmentation. This is huge. But why?

Imagine you run a test and it results in creative asset combination A beating creative asset combination B. Great.

But what if you then segment the data further?

Let’s say that creative asset combination A converted highest overall with the most decisive installers to boot but creative asset combination B converted highest among explorative users. What does this mean?

There is a difference between what the decisive installers saw and what the explorative users saw. Explorative users were exposed to messaging that the first group were not.
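Here is a small sketch of how that split can show up in the numbers. All figures are invented purely to illustrate the scenario, and the record layout and helper names are assumptions.

```python
from collections import defaultdict


def conversion_rates(visits, key):
    """Install rate per group, where key(visit) names the group."""
    installs = defaultdict(int)
    totals = defaultdict(int)
    for visit in visits:
        group = key(visit)
        totals[group] += 1
        installs[group] += visit["installed"]  # True counts as 1
    return {group: installs[group] / totals[group] for group in totals}


def make(variant, explored, installed, n):
    """Build n identical hypothetical visit records."""
    return [{"variant": variant, "explored": explored, "installed": installed}] * n


# Invented data: 100 visitors per creative combination.
visits = (
    make("A", False, True, 40) + make("A", False, False, 40)
    + make("A", True, True, 4) + make("A", True, False, 16)
    + make("B", False, True, 24) + make("B", False, False, 56)
    + make("B", True, True, 10) + make("B", True, False, 10)
)

overall = conversion_rates(visits, lambda v: v["variant"])
by_behavior = conversion_rates(
    visits,
    lambda v: (v["variant"], "explorative" if v["explored"] else "decisive"),
)
print(overall)
print(by_behavior)
```

With these made-up numbers, A wins overall (44% versus 34%), yet B converts explorative users at 50% against A's 20%. A broad test alone would have hidden that.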

Now, what if we could tell you which users were more likely to explore and which users weren’t and link it back to which are your highest-valued installers? You could then create and test different messaging based on who you know is more likely to be exposed to the different creative assets that make up the store page.

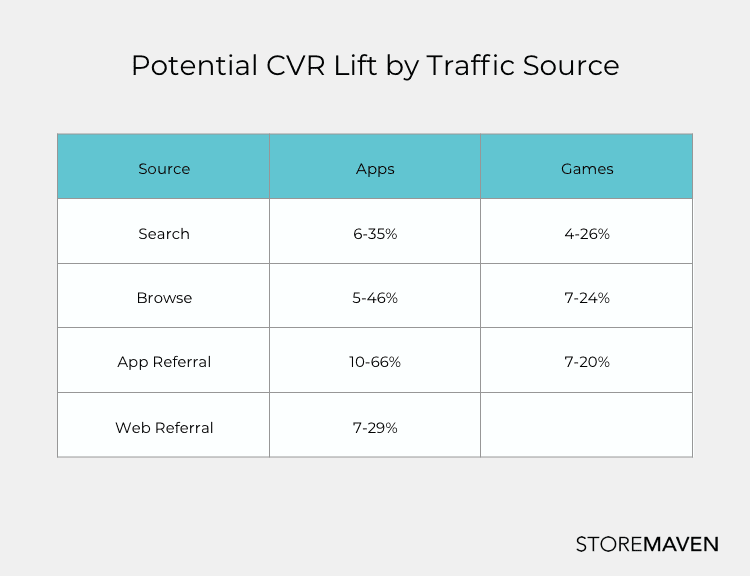

Over the years, we have also discovered the potential conversion rate uplift that asset optimization from different traffic sources can bring about. By understanding which users are most likely to see which messages (and which of those messages convert best), you have uncovered the golden ticket.

The testing funnel is simple. Specific demographic audiences are targeted with adverts on Facebook (we have found that it can be used to imitate organic users as well as paid traffic channels). After users click the ad, they are led to the sandbox app store testing page. Their activity on the testing page can then be monitored and traced back to the original targeted audience, creating a direct link between activity and the breakdown of the user who performs that activity.

This means you can see trends in specific actions taken by specific audiences and run specific tests to see which messaging and which asset combinations convert the highest for each audience segment. You can learn about user preferences by knowing which users respond to which messages. As an example, if you have a travel app and notice that your boomer audience explores at a higher rate than millennials, you can create messaging optimized for those boomers on the later screenshots (beyond the First Impression). You already know that millennials are less likely to see them, so it won’t hurt their conversion rate, but it will significantly affect the conversion rate of that boomer audience who you know are exploring there.
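The link between ad audience and on-page behavior boils down to a join. The sketch below assumes each test-page session carries the ID of the ad click that produced it. The IDs, the audience labels and the data are invented, and this is not how any particular ad platform exposes its data.

```python
from collections import defaultdict

# Hypothetical mapping from ad click ID to the audience that ad targeted.
click_audience = {
    "c1": "boomer",
    "c2": "boomer",
    "c3": "millennial",
    "c4": "millennial",
    "c5": "millennial",
}

# Hypothetical test-page sessions: (ad click ID, explored beyond First Impression).
sessions = [("c1", True), ("c2", True), ("c3", False), ("c4", True), ("c5", False)]


def explore_rate_by_audience(sessions, click_audience):
    """Join sessions to their targeted audience and compute explore rates."""
    explored = defaultdict(int)
    totals = defaultdict(int)
    for click_id, did_explore in sessions:
        audience = click_audience.get(click_id)
        if audience is None:
            continue  # session cannot be traced back to a targeted audience
        totals[audience] += 1
        explored[audience] += did_explore
    return {audience: explored[audience] / totals[audience] for audience in totals}


rates = explore_rate_by_audience(sessions, click_audience)
print(rates)
```

An audience with a clearly higher explore rate is the one worth writing the later screenshots for.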

One app store page. One set of creatives. Innumerable insights.

There are two immutable facts in the app stores: 1) you can only have one set of creatives and 2) you only get one place to say it. So what’s the point? The ultimate goal of every marketer and product developer is to understand the needs of their users, and the best way to get to know them is via thorough audience-segmented testing.

It offers the ability to create messaging combinations optimized for specific user journeys that won’t affect other users (e.g. messaging that converts explorative users can be placed where only they will see it).

It provides insights into how different demographic audiences behave and respond. You can use these insights to start exploring how to target and convert audiences beyond your core. You can use this understanding to see how far from your core messaging you can go before you start negatively impacting your conversions. You can optimize your messaging for the broadest audiences. You can target specific audiences where you know you’ll find them. You can leverage these insights to align with your general marketing efforts: for the quarter, for the year, for long-term growth. The more you know, the more you can do, and the more you can do, the more you can achieve.

Testing is the surest way to stop assuming and start knowing.