The most common question I get from our new customers is “Where do I start A/B testing App Store pages with Storemaven, and how does that fit into our App Store Optimization flow?”

App Store Optimization (ASO) is a world of endless options and variables to analyze and discover on your way to the best-converting app page. A successful ASO strategy is not simply a matter of testing a variety of creatives to find the variation with the highest install rate – you also have to consider the quality of the users who install. Instead of focusing only on the raw number of users downloading your app, you want to make sure you’re pulling in users who will actually lift your KPIs.

So before you jump in, let’s define the objectives of your first tests:

- Measuring the impact of different marketing messages and creatives

- Finding areas with missed potential in your app page

- Learning how different variables affect your install rate (CVR)

Accomplishing these objectives at the very beginning will not only increase your CVR, but it will help shape your whole ASO strategy.

So behold… my top 5 Maven tips to get you ready for killing your KPIs:

1. Testing only one element at a time will help you gain more focused learnings.

It takes time and testing in order to study each element’s impact on your app page. While it may be tempting to test everything all at once, you’ll ultimately slow down the process by missing out on understanding why users responded to each variable the way they did.

Keep all elements exactly the same except for the one that you are testing, for example:

- Different Icon in each variation

- Different First Gallery Image in each variation

- Different Featured Graphic in each variation

and so on.

Following this rule will help you reach the clearest, most accurate and significant test results by showing you exactly what impacts your conversion in each test.

2. Start with a Screenshot or Icon test on iOS. On Google Play, focus on the Featured Graphic or Icon.

These are the most impactful elements in your app page and, for most users, will determine whether they install your app. Testing these elements will lead to more significant results, since users generally pay the most attention to these creatives.

3. Make differences between variations DISTINCT.

Subtle changes between elements generally have minimal impact on your users and don’t yield insights into what messaging your users really respond to. You want to focus on creatives that are dramatically different to learn the most and make significant improvements to your CVR.

Here is a short example that demonstrates this notion when testing icons:

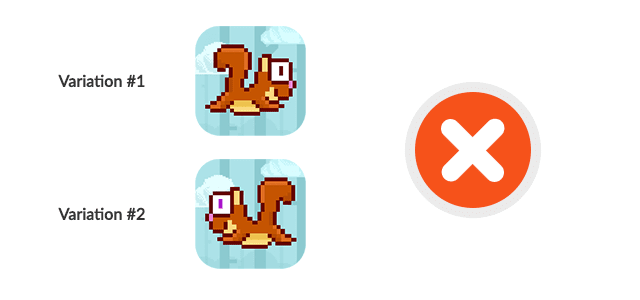

Test A:

Test B:

In Test A, changing the direction of the character & the small color changes of its eye won’t have a major effect as the message remains identical in both tested variations.

You wouldn’t expect users to react (significantly) differently to each icon as they are practically identical.

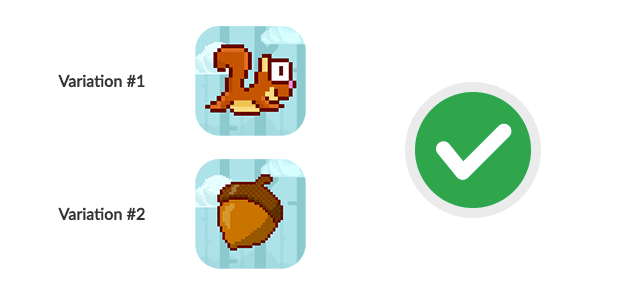

In test B, the competing variations deliver an entirely different message:

Variation #1:

A character-focused icon generally has stronger instant appeal. Users who like the character will give the game a chance.

Variation #2:

A more generic version showing a gameplay feature usually drives more exploration from users who want to understand how this element plays into the game, and what kind of game they are looking at.

4. Start testing with 2-3 variations in a test.

Juggling many variations can be confusing at first. I recommend creating 2-3 variations with dramatic differences to keep the traffic under control and the results clearer. Once you’ve run a few tests, you’ll have a better understanding of how much traffic is required for each variation, and can budget accordingly.

5. It is highly recommended to run tests for at least 5-7 days, with a consistent traffic volume each day.

This is important!

It’s better to spread your traffic budget across multiple days rather than driving a large volume of traffic in one.

Across the hundreds of tests I’ve analyzed and reviewed, keeping traffic volume balanced throughout the lifecycle of a test makes trends stand out, so the true progress and performance of each variation over time become noticeable and reliable.
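To make the "spread it out" advice concrete, here is a minimal sketch of splitting a total traffic budget into near-equal daily volumes. The function name `daily_traffic_plan` and the example numbers are my own illustration, not a Storemaven feature.

```python
def daily_traffic_plan(total_users: int, days: int) -> list[int]:
    """Split a total traffic budget into near-equal daily volumes.

    Hypothetical helper for illustration only.
    """
    if days <= 0:
        raise ValueError("days must be positive")
    if total_users < 0:
        raise ValueError("total_users cannot be negative")
    base, remainder = divmod(total_users, days)
    # The first `remainder` days get one extra user so the plan sums to the total.
    return [base + 1 if day < remainder else base for day in range(days)]


if __name__ == "__main__":
    # Example: an assumed budget of 6,000 users over a 7-day test.
    plan = daily_traffic_plan(total_users=6000, days=7)
    for day, users in enumerate(plan, start=1):
        print(f"Day {day}: {users} users")
```

No day differs from another by more than one user, which is the kind of consistent daily volume the tip calls for.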

The amount of traffic needed to reach a significant conclusion depends on many variables, such as: traffic channels, ad creatives, targeted countries, conversion rates, test type & tested elements on each app page.

When testing properly, app store pages with higher CVRs (over 30%) need a few hundred users in each variation. That number can climb to roughly 1,500 – 2,000 users per variation for lower CVRs (less than 5%) and traffic channels with lower conversion.
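If you want a feel for why lower CVRs need more traffic, here is a rough sketch using the textbook sample-size formula for comparing two proportions. This is not how StoreIQ™ works. The significance level, power and the lift you hope to detect are all assumptions I picked for illustration, so the outputs will not match the figures above exactly.

```python
import math
from statistics import NormalDist


def users_per_variation(baseline_cvr: float, relative_lift: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per variation to detect a relative CVR lift.

    Textbook two-proportion formula (two-sided test); all defaults are
    illustrative assumptions, not Storemaven settings.
    """
    if not 0 < baseline_cvr < 1:
        raise ValueError("baseline_cvr must be between 0 and 1")
    if relative_lift <= 0:
        raise ValueError("relative_lift must be positive")
    p1 = baseline_cvr
    p2 = baseline_cvr * (1 + relative_lift)
    if p2 >= 1:
        raise ValueError("lifted CVR must stay below 1")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # critical value for the power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


if __name__ == "__main__":
    # Same assumed 25% relative lift, two different baseline CVRs.
    for baseline in (0.30, 0.05):
        needed = users_per_variation(baseline, relative_lift=0.25)
        print(f"Baseline CVR {baseline:.0%}: about {needed} users per variation")
```

The direction is what matters here: with the same relative lift, the lower baseline CVR needs noticeably more users per variation.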

StoreMaven will reduce the costs and volume of traffic needed to reach significance by using StoreIQ™ – our unique implementation of machine-learning algorithms, as well as by assisting you in creating strong tests.

Expectations from test results

The more distinct the differences are between tested elements, the more likely you are to find a larger gap between variations and conclude your tests with less traffic & higher confidence levels.
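To see how the size of the gap affects confidence, here is a small sketch using a standard two-proportion z-test. It is a generic statistics example, not Storemaven's analysis, and the install and user counts are made-up illustration values.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(installs_a: int, users_a: int,
                           installs_b: int, users_b: int) -> float:
    """Two-sided p-value for the difference between two install rates.

    Standard pooled z-test; a generic sketch, not a Storemaven method.
    """
    if users_a <= 0 or users_b <= 0:
        raise ValueError("each variation needs at least one user")
    cvr_a = installs_a / users_a
    cvr_b = installs_b / users_b
    pooled = (installs_a + installs_b) / (users_a + users_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / users_a + 1 / users_b))
    if std_err == 0:
        return 1.0  # no variation in the data, so no evidence of a difference
    z = (cvr_b - cvr_a) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


if __name__ == "__main__":
    # Hypothetical counts: same traffic per variation, different gaps.
    subtle = two_proportion_p_value(300, 1000, 310, 1000)
    distinct = two_proportion_p_value(300, 1000, 375, 1000)
    print(f"Subtle gap p-value:   {subtle:.3f}")
    print(f"Distinct gap p-value: {distinct:.4f}")
```

With identical traffic, the wider gap produces a much smaller p-value, which is the statistical side of "make your variations distinct."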

The same goes for testing more impactful elements: in most cases, testing your Screenshot, Icon, Poster Frame, Feature Graphic, and Video will yield more significant results than testing your full Description, Ratings, and number of downloads.

You can expect a CVR lift of up to 25% (and even more!), along with truly meaningful results on both the App Store and Google Play, when you test your elements properly and create variations whose content reflects different messages.
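For clarity on what a "CVR lift" means here, this is a minimal sketch of the arithmetic, assuming lift is measured relative to the control variation. The function names and the visitor and install counts are hypothetical.

```python
def cvr(installs: int, visitors: int) -> float:
    """Install rate: installs divided by app page visitors."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    return installs / visitors


def relative_lift(control_cvr: float, variant_cvr: float) -> float:
    """Relative change of the variant's CVR compared with the control's."""
    if control_cvr <= 0:
        raise ValueError("control_cvr must be positive")
    return (variant_cvr - control_cvr) / control_cvr


if __name__ == "__main__":
    # Hypothetical test: 1,000 visitors per variation.
    control = cvr(installs=200, visitors=1000)
    variant = cvr(installs=250, visitors=1000)
    lift = relative_lift(control, variant)
    print(f"Control CVR: {control:.1%}, variant CVR: {variant:.1%}, lift: {lift:.0%}")
```

Note that the lift is relative: in this made-up example the CVR moves by five percentage points, which is a 25% improvement over the control.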

Happy Testing!