As a mobile marketer, you have one main goal: acquire the most qualified users, profitably.

Previously, you may have updated your app store assets based on research (and a little instinct) and then waited to see if your new creatives improved conversion, a strategy similar to throwing spaghetti against a wall and seeing what sticks. The problem is that it’s nearly impossible to replicate success when you don’t know why you were successful in the first place. And if you didn’t achieve success, it’s just as difficult to pinpoint why something didn’t work or how to improve next time without concrete data to support your assumptions.

This is where app store optimization (ASO) comes in. In the last few years, ASO has evolved from a simple, sometimes forgotten task to one of the main strategies companies implement to achieve their mobile marketing goals. At its core, it’s one of the most effective, data-driven methods to increase the conversion rates (CVR) of quality users while decreasing cost per install (CPI).

One pillar of ASO involves the optimization of your keywords, and the other involves creative optimization. Within creative optimization, there are a variety of assets you can test—app icon, screenshot gallery, videos, description, etc. However, given the amount of research, preparation, and analysis that goes into each test, it’s easy to fall into the trap of running tests that don’t reveal actionable insights or won’t propel you forward in meeting your KPIs.

For that reason, we’ve assembled the ideal recipe for an effective ASO test based on our experience working with global mobile developers.

1) Strong Hypotheses

Developing a strong hypothesis is the foundation of effective ASO testing. Hypotheses drive the direction of every test, and without one, you risk designing and running a test that won’t uncover relevant or valuable information.

Before you develop hypotheses, though, you must first gain a deep understanding of your industry’s landscape. This involves both audience research and competitive research.

Audience Research

One of the reasons many tests don’t extract as much value as they could is that companies don’t strategically decide which audiences to test. They focus too much on increasing conversion without paying attention to who they’re actually converting. If your targeting is too broad, you may convert users who don’t provide lifetime value (LTV). The key is to identify which users are contributing to the highest LTV and segment them based on factors such as:

- Age

- Location

- Gender

- Interests

Given this initial segmentation, you can better analyze the effectiveness of your ASO tests based on whether or not you successfully converted your quality users.
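As a rough illustration of this segmentation step, the sketch below groups user records by a few attributes and ranks the segments by average LTV. The records, field names (`age_group`, `location`, `ltv`), and values are hypothetical placeholders; in practice they would come from your own analytics export.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical user records; field names and values are illustrative only.
users = [
    {"age_group": "18-24", "location": "US", "ltv": 4.0},
    {"age_group": "18-24", "location": "US", "ltv": 6.0},
    {"age_group": "25-34", "location": "US", "ltv": 12.0},
    {"age_group": "25-34", "location": "DE", "ltv": 9.0},
    {"age_group": "25-34", "location": "US", "ltv": 14.0},
]


def average_ltv_by_segment(records, keys):
    """Group records by the given keys and return the mean LTV per segment."""
    buckets = defaultdict(list)
    for record in records:
        segment = tuple(record[key] for key in keys)
        buckets[segment].append(record["ltv"])
    return {segment: mean(values) for segment, values in buckets.items()}


def rank_segments(records, keys):
    """Return (segment, mean LTV) pairs sorted from highest to lowest LTV."""
    averages = average_ltv_by_segment(records, keys)
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)


ranked = rank_segments(users, ["age_group", "location"])
for segment, ltv in ranked:
    print(segment, round(ltv, 2))
```

The top-ranked segments are the "quality users" whose conversion you would then track in each test. With real data you would also want enough users per segment for the averages to be meaningful.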

Competitive Research

The next step is to research direct competitors in all your major markets.

What do their app store pages look like? How are they differentiating themselves from others in your category? What design styles and general trends (e.g., gallery orientation, video vs no video, etc.) do you notice? How do they change their messaging or creatives by geo? How do they rank (both overall and by category) compared to you?

In addition to product pages, there’s value in seeing how competitors look within organic search and browse contexts (e.g., search results, top charts, etc.), especially if most of your installs come from organic channels. This allows you to put yourself in the mindset of searching and browsing visitors and can help you strategize how to make your app or game stand out in these contexts.

In general, you should be familiar with what other apps or games in your category are doing, how they use their app store assets to convey their value propositions, and where there’s an opportunity to differentiate yourself. Tools like the ASO Tool Box and AppFollow can assist you in this research quickly and effectively.

Develop Hypotheses

Based on your audience, competitive, and internal research (e.g., reading reviews to see what current users love or dislike, etc.), you’re better positioned to develop strong and informed hypotheses. Hypotheses should pose a clear question of what you’re trying to answer in your test(s), and they enable you to identify the best way to market your app or game in terms of messaging, creative execution, or a combination of both. Hypothesis building is a critical step in ASO testing, so make sure you invest enough time and thought into creating them so you extract the full value out of your research and tests.

The key to a strong hypothesis is that it tests distinct elements and is framed in a way that will reveal something interesting about your users. Here are some examples:

Lifestyle App

- Visitors prefer to see the experience of using the app versus specific features. If you’re a travel app, for example, this could mean using an image of a person travelling instead of highlighting the booking feature within the app.

- Visitors are more sensitive to elements of social proof (e.g., best app of the year, recommended by experts, etc.) than learning about the app’s functionality.

Mobile Game

- Visitors will respond more to seeing the game’s narrative and characters compared to specific gameplay elements.

- Visitors are more interested in one game feature over another. For example, they will be more interested in the player versus player (PvP) social feature than the battle elements of the game.

Hypotheses will vary based on the app or game, but in every case you will want to make sure the hypotheses are distinct enough so there’s a clear difference in performance. This will also enable you to isolate variables within the test. If you test too many variables, for example, you’re unable to pinpoint which specific elements had the most impact. On the other hand, if you make changes that are too subtle or insignificant (e.g., changing the icon’s background color, changing the color of a character’s outfit, etc.), you might not see an impact and, again, won’t extract value out of the test.

When a test concludes, you want to confidently answer the question regarding what made the difference to visitors and what drove conversion, whether it was an important feature to highlight, an emotion to evoke or a character to showcase.

2) App Store Creatives

You’ve done the research and developed your hypotheses, so now you need to identify which creatives to test and how to design them so they bring you closer to achieving your business goals.

Step 1: Identify the App Store Creatives to Test

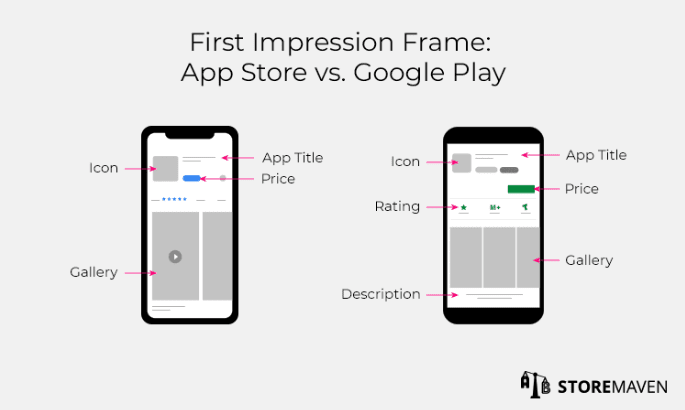

We’ve found that the First Impression Frame (everything above the fold) matters most. You have roughly 3 seconds to capture visitors’ attention before they decide to either install or drop from the page, so it’s imperative that the assets presented there are compelling. And it pays off. Based on analyses of tests conducted on our platform, an optimized First Impression can increase conversion by up to 26%.

To start, you should identify the most dominant marketing assets in the First Impression Frame of each app store platform:

- On iOS, you can focus on the Gallery assets (i.e., Screenshots and/or Videos) or Icon

- On Google Play, given the Play Store redesign, you can focus on the Icon, Video thumbnail, Video presence in general (since they don’t autoplay like on iOS), and Screenshots

On both platforms, the Icon plays an important role throughout the visitor journey as it’s the only visual element that appears when they browse through top charts, find your app/game in the Search Results, or land on your full Product Page. We’ve also found that it’s an effective asset to keep visitors engaged. For more best practices on icon testing, see our App Icons Testing Guide.

Alternatively, the Gallery is one of the most impactful creatives on both the App Store and Google Play as it’s placed above the fold and occupies a majority of the page’s real estate. Our data also supports optimizing the Screenshots in your Gallery, as it can lift CVR by up to 28%. If you’re interested in optimizing your Gallery, you can check out our guide on app store screenshot best practices.

Overall, the key is to think about your unique mix of visitors. If you already know how visitors engage with your page, where they come from, what assets they’re typically exposed to, etc., you’ll have a better idea about which creatives would make the most impact to test first.

Step 2: Choose Your Design Style

Based on the creatives you choose to test, the next challenge is identifying the most effective way to connect your hypotheses with design. If one of your hypotheses posits that visitors prefer to see the emotional experience of using the app versus specific features, then the creatives of each variation should bring that statement to life.

It may seem straightforward, but it’s easy to design tests that don’t lead to actionable results, especially if your variations are too similar in design (e.g., just changing the background color). To ensure your variations will reveal actionable and valuable insights, keep our design strategies in mind.

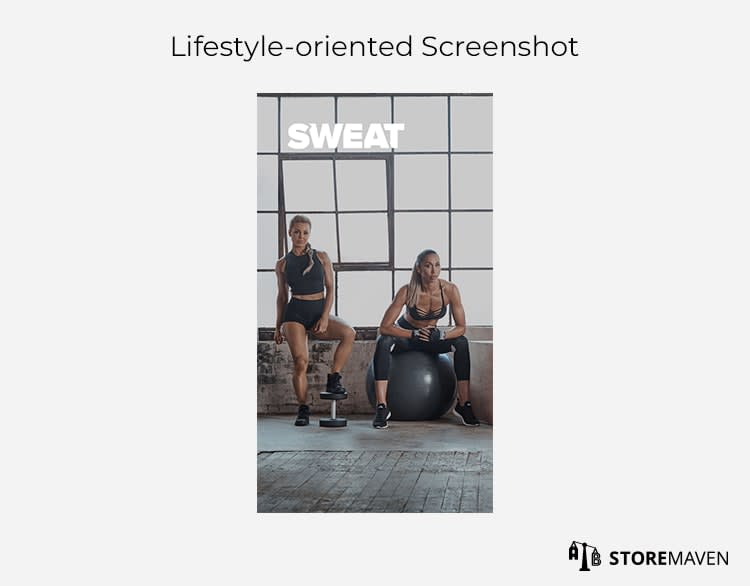

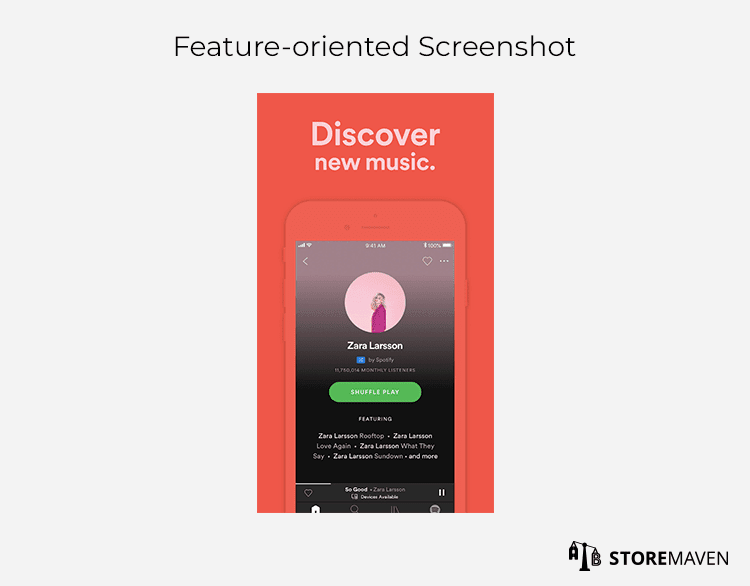

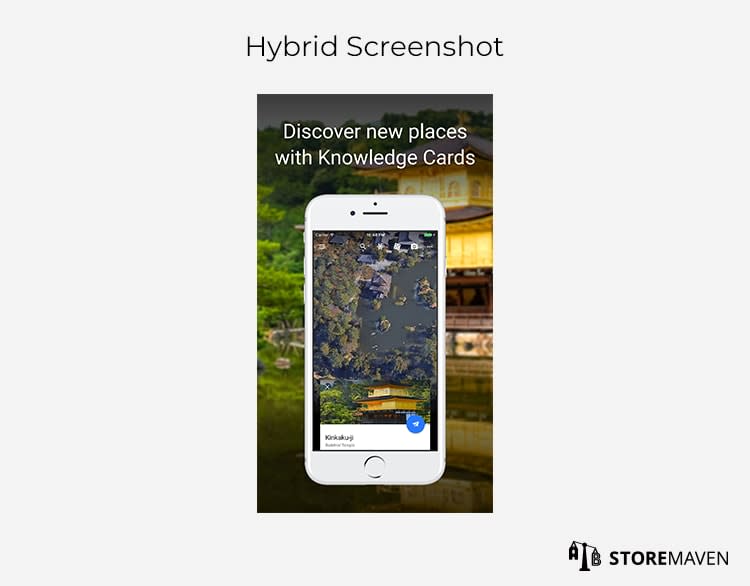

For apps, there are three main design styles to consider, and each has its unique advantages depending on what you want to highlight to potential installers:

Lifestyle-oriented Screenshots use real-world images or visuals to develop an emotional connection with visitors. If your app UI isn’t a strong draw to your app, we recommend testing this design style. As you can see in the example above, the SWEAT fitness app uses a lifestyle Screenshot showcasing an aspirational image of fitness instructors to drive more conversion from users motivated to get into shape.

Feature-oriented Screenshots showcase real screenshots of the app to highlight different features or use cases. This design style can be effective if your UI is a unique differentiator in your category. Spotify uses this design style in their Gallery to highlight different app benefits, such as the ability to discover new music on its platform.

Hybrid Screenshots are a combination of the two above design styles. This option gives you the freedom to showcase an emotionally appealing image in conjunction with app UI so you have the best of both worlds. You can see how Google Earth uses this technique to showcase app UI against the backdrop of a place users can explore within the app.

For games, we’ve identified five different design styles:

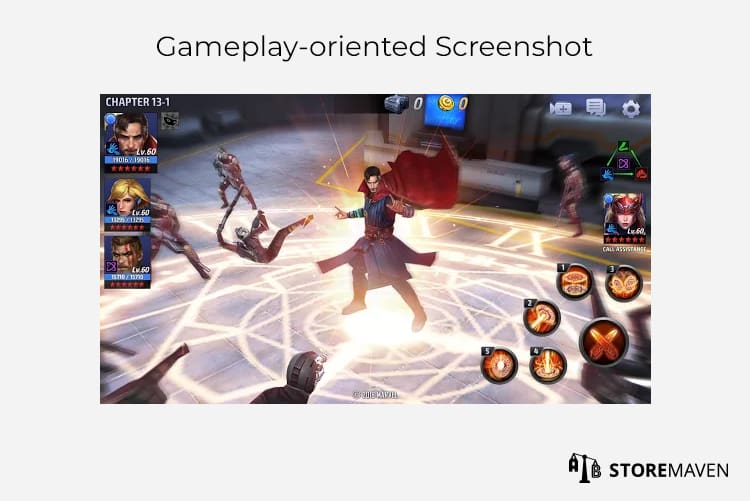

Gameplay-oriented Screenshots highlight tactical gameplay to show how the game works. This style tends to attract hardcore gamers who make install decisions based on the production value and quality of game graphics. MARVEL Future Fight highlights direct gameplay in one of their Screenshots so gamers get a glimpse of what it’s like to play the game.

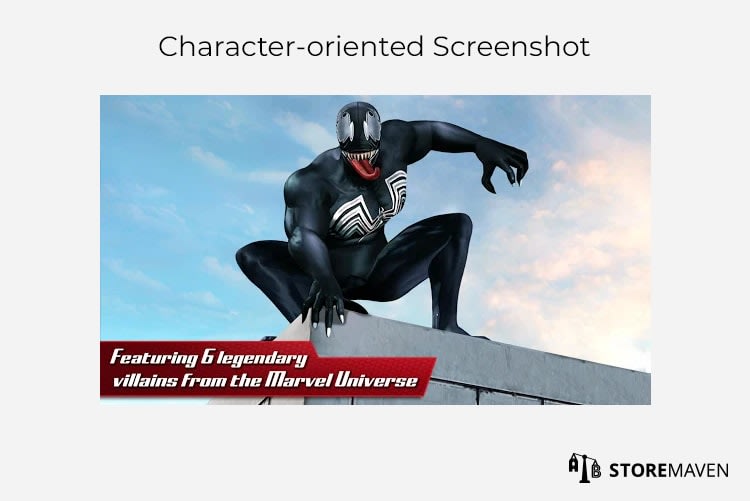

Character-oriented Screenshots put the emphasis on characters within the game. This is most effective for games with strong IP and brand recognition, or games whose unique characters drive most of the appeal. In the example above, the developers are capitalizing on the popularity (and notoriety) of villains in the Marvel Universe to attract players.

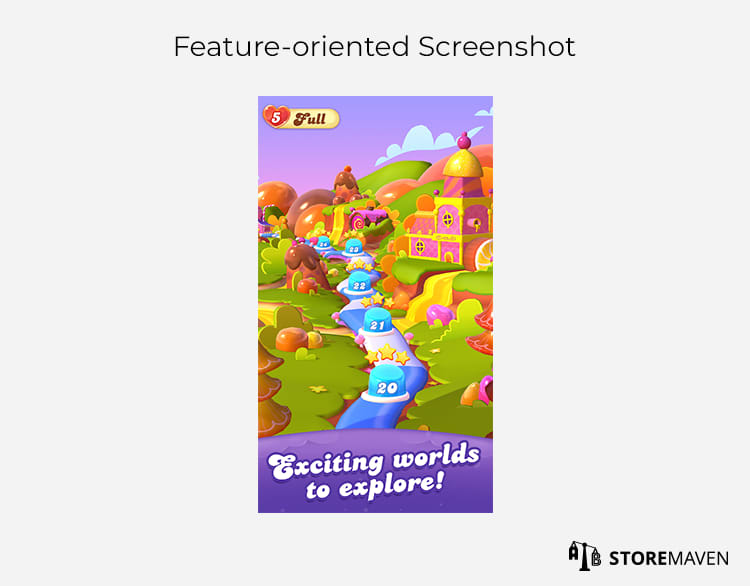

Feature-oriented Screenshots are used to showcase specific features of the game, such as the ability to team up with players worldwide, battle bosses, or unlock new characters. If your unique selling proposition relates to the storyline or certain characteristics of the game, you should test this design style. In the example above, Candy Crush Friends Saga highlights users’ ability to explore new worlds as a way to appeal to new users.

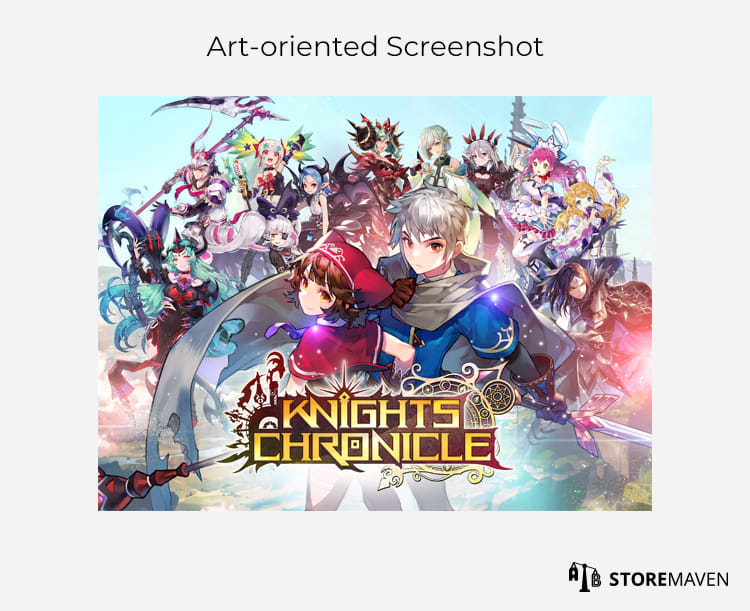

Art-oriented Screenshots are less common but used if your target users are interested in a more artistic representation of your game or gameplay. These images are not direct screen captures, but they artistically show the assets, characters, or gameplay using high-res and high-quality graphics. Knights Chronicle uses an art-oriented Screenshot to showcase the anime-style characters and to create a more interesting portrayal of their game.

Hybrid Screenshots, similar to Hybrid Screenshots for apps, represent a combination of any of the above design styles. In the Harry Potter: Hogwarts Mystery game, the developers use this hybrid design style to showcase a well-known character and a unique game feature (i.e., mastering spells and potions) to captivate a wider audience.

Bonus step: Localize Your Creatives for a Global Audience

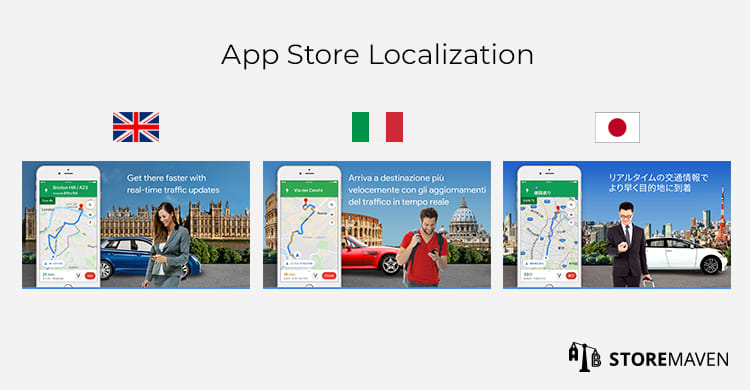

Another creative consideration depends on whether your app or game is available globally. If you’re targeting a variety of markets, you can localize or culturalize your creatives, which means adapting your app store assets to the language and culture of different regions.

To do so, you must conduct the same comprehensive level of competitive and audience research per GEO that you completed for your primary market. You should also develop entirely new hypotheses by location, as many markets differ in factors such as their interests, preferences, price-sensitivity, motivations, etc.

There are different ways you can do this based on your resources. On one hand, you can focus only on translating your Product Page to different languages. If you’d like to take it a step further, you can create a custom app store per GEO and tailor the overall messaging and design to each region’s unique values and beliefs. Google Maps, in the example above, has a unique way of doing both. The Gallery assets are translated, and they use varying images to showcase landmarks and streets that are unique to that market.

In a majority of tests we’ve run with both a translated app store variation and a culturalized app store variation, the culturalized variation won. This shows there is an even higher CVR potential for culturalizing app stores. Despite the benefits, many companies aren’t doing this. This presents a unique opportunity for you to expand your app’s global reach and distribution potential by making your app/game more relevant to locals in different countries.

3) Traffic Sources

Once you’ve solidified your creative design, you’ll need to define the right mix of traffic to send to each variation. The key is to make sure you’re sending audiences that most closely resemble the high-quality segment of users you identified previously.

Within this representative audience, you should segment these target users even further (by intent, demographics, etc.) to effectively assess the positive and negative impact each variation has on different quality user groups. This will help you make more informed decisions about which variation to move forward with depending on the users it’s successful in converting.

For example, traffic coming from two different sources can lead to varying results. At the end of the test, it’s important to analyze how each variation impacted those two segments separately. If you find a certain variation has harmed conversion of your highest LTV users, it might not be worth the conversion uplift you saw from a different paid traffic segment. In the same vein, if your main audience is female, you want to ensure the variation you upload to the live store still converts well among female audiences.

At the end of the day, you only have one Product Page that a variety of audiences will see. You want to strike the right balance between maintaining high conversion among your highest quality users without significantly harming performance of other audiences.
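To make the trade-off concrete, here is a minimal sketch that compares a variation against the Control separately for each traffic segment as well as overall. The segment names (`high_ltv`, `paid_broad`), variation names, and all counts are made-up assumptions for illustration, not real test data.

```python
# Hypothetical results keyed by (variation, traffic segment).
results = {
    ("control", "high_ltv"): {"visitors": 1000, "installs": 300},
    ("control", "paid_broad"): {"visitors": 1000, "installs": 200},
    ("variation_b", "high_ltv"): {"visitors": 1000, "installs": 270},
    ("variation_b", "paid_broad"): {"visitors": 1000, "installs": 260},
}


def cvr(stats):
    """Conversion rate: installs divided by visitors."""
    return stats["installs"] / stats["visitors"]


def relative_lift_by_segment(data, control, candidate):
    """Relative CVR change of the candidate versus the control, per segment."""
    segments = sorted({segment for (_, segment) in data})
    lifts = {}
    for segment in segments:
        base = cvr(data[(control, segment)])
        new = cvr(data[(candidate, segment)])
        lifts[segment] = (new - base) / base
    return lifts


def overall_cvr(data, variation):
    """CVR of one variation across all segments combined."""
    rows = [stats for (name, _), stats in data.items() if name == variation]
    installs = sum(row["installs"] for row in rows)
    visitors = sum(row["visitors"] for row in rows)
    return installs / visitors


lifts = relative_lift_by_segment(results, "control", "variation_b")
overall_lift = (
    overall_cvr(results, "variation_b") - overall_cvr(results, "control")
) / overall_cvr(results, "control")

for segment, lift in lifts.items():
    print(f"{segment}: {lift:+.1%}")
print(f"overall: {overall_lift:+.1%}")
```

In this invented data set the variation wins overall while losing ground with the high-LTV segment, which is exactly the situation a blended CVR number would hide.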

4) The Test

Now that you’ve developed your hypotheses, prepared the creatives, and identified the traffic blend you plan to send to each variation, it’s time to start running the test.

When conducting a test, it’s important that you leave it open for an adequate amount of time. Closing a test prematurely, especially if you’re working with Google Play Store Listing Experiments, is a common mistake that can lead to inaccurate results. There are a variety of external factors, such as seasonality, that can impact installs, so ending tests prematurely doesn’t allow you to take these factors into account. Using more sophisticated testing platforms ensures tests are run long enough to achieve high confidence in the results.

Here are some of our best practices:

- Run the test for a minimum of 7 days to get data on every day of the week, especially since weekdays and weekends can yield different visitor behavior. Depending on the testing platform you use, it should automatically keep tests running long enough to ensure adequate data is captured. For example, our proprietary machine-learning algorithm, StoreIQ™, monitors and closes underperforming variations faster while requiring less traffic to be sent to the test, which subsequently saves you time and money on your testing efforts.

- Make sure you send sufficient and consistent traffic volume throughout the test.

- Don’t mix GEOs—each GEO is unique in its own way, which is why we recommend localizing your store and having different variations per GEO.

- Make sure you avoid these common ASO testing mistakes.
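If you want a rough sanity check before closing a test, one common generic approach is a two-proportion z-test combined with the 7-day minimum. The sketch below is a textbook calculation under assumed inputs, not how StoreIQ or Google Play Store Listing Experiments decide when a test is done; the function names, the 0.05 threshold, and the counts are all illustrative assumptions.

```python
import math


def two_proportion_z_test(installs_a, visitors_a, installs_b, visitors_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a = installs_a / visitors_a
    p_b = installs_b / visitors_b
    # Pooled rate under the null hypothesis that both variations convert equally.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    standard_error = math.sqrt(
        pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b)
    )
    z = (p_b - p_a) / standard_error
    # Standard normal CDF expressed through the error function.
    normal_cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - normal_cdf)
    return z, p_value


def ready_to_close(days_run, p_value, min_days=7, alpha=0.05):
    """Require both a full week of data and a p-value below the threshold."""
    return days_run >= min_days and p_value < alpha


# Hypothetical counts: control converts 500 of 2,500, variation 575 of 2,500.
z, p_value = two_proportion_z_test(500, 2500, 575, 2500)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("close after 5 days?", ready_to_close(5, p_value))
print("close after 7 days?", ready_to_close(7, p_value))
```

Note that checking a fixed-sample test like this repeatedly and stopping at the first significant result inflates false positives, which is one reason dedicated testing platforms use their own stopping rules.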

5) Analysis

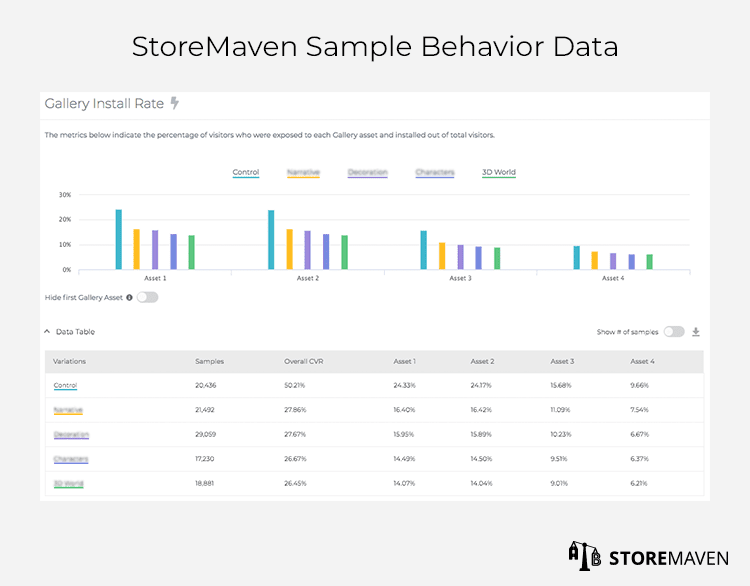

One of the most important, yet often neglected, aspects of ASO testing is the analysis. In general, developers and mobile marketers tend to look at conversion as the only metric for post-test analyses. However, in order to develop a sustainable ASO strategy, you need to understand not only which variation performed better but why it did. The only way to isolate these insights is to look at app store engagement data.

On Google Play Store Listing Experiments, you can only see the CVR of each variation, which typically doesn’t provide enough context to fully understand why one variation converted better than another. If working with a sophisticated testing platform, you can also see:

- Funnel metrics that break down user behavior (e.g., what percentage of your visitors were decisive and made a decision solely on assets they saw above the fold versus visitors who explored and engaged with assets before making a decision)

- Engagement metrics (e.g., Gallery Scroll and Install Rate, Video Watch Rate, Video CVR, percentage of visitors who read your reviews, percentage of visitors who expanded your description, session time, etc.)

As you can see in the sample data above, testing platforms track metrics like the conversion of each Gallery asset so you can isolate the most impactful message(s).

We recommend starting the analysis by understanding your unique mix of visitors—how many were decisive and made a decision without scrolling through your app store assets, and how many chose to explore more? Once you have this breakdown, it’s important to dig into why this mix is happening.
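The decisive-versus-exploring breakdown described above can be sketched as follows. It assumes a testing platform exports one record per visitor session with two flags; the field names `explored` and `installed` and the sample sessions are hypothetical.

```python
# Hypothetical session export: 6 decisive visitors and 4 exploring visitors.
sessions = (
    [{"explored": False, "installed": True}] * 3
    + [{"explored": False, "installed": False}] * 3
    + [{"explored": True, "installed": True}] * 1
    + [{"explored": True, "installed": False}] * 3
)


def funnel_breakdown(records):
    """Share of traffic and install rate for decisive and exploring visitors."""
    total = len(records)
    breakdown = {}
    for label, explored in (("decisive", False), ("exploring", True)):
        group = [r for r in records if r["explored"] == explored]
        installs = sum(1 for r in group if r["installed"])
        breakdown[label] = {
            "share": len(group) / total if total else 0.0,
            "install_rate": installs / len(group) if group else 0.0,
        }
    return breakdown


breakdown = funnel_breakdown(sessions)
for label, stats in breakdown.items():
    print(f"{label}: {stats['share']:.0%} of visitors, "
          f"{stats['install_rate']:.0%} install rate")
```

Running the same breakdown per variation shows whether a new creative changed how many visitors explore, how well explorers convert, or both.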

For example, if you notice a high explore rate, does it mean your First Impression was effective in enticing visitors to explore your page and learn more? Or, does it mean that it confused visitors and triggered them to explore other assets to see what value your app or game provides? One way to attempt answering this is to look at engagement metrics:

- Are these exploring visitors watching your Video or scrolling the Gallery? If so, are they installing or dropping at a certain rate after being exposed to a specific message? Is there repetition within the assets that could be causing a drop?

As you can see, behavior metrics are required to tell a complete story as to how your audience perceived each variation, and which specific messages or creatives convinced them to install. This data will also help you develop a long-term testing strategy by providing insights on what you should test next, how you can develop iterations and design smarter tests moving forward, and, most importantly, whether the installers you attracted are high-quality or if you should shift the traffic blend in your UA efforts.

The point of testing is not just to find a winner or a loser, but to gain insights throughout the process that can drive your ASO strategy forward. This also means that if the Control wins, it shouldn’t be considered a “failed” test. Each test, no matter the outcome, will uncover actionable insights that you can use to inform future tests.

Make ASO a Key Part of Your Long-Term Mobile Marketing Strategy

One of the most important things to realize is that ASO is not just a one-time effort. Your ability to achieve long-term success relies on the implementation of a consistent testing strategy that uncovers unique insights about your users and drives meaningful results. This is the road to achieving your KPIs and competitive advantage.

Plus, the insights you gain from testing are applicable to your broader mobile marketing efforts. If you find that a specific Screenshot highlighting a new feature had the highest install rate, then you can test this same messaging in your paid UA ad campaigns to drive more conversion to your app store page. It’s a win-win in every case.

Have any questions about ASO or testing in general? Feel free to reach out to us at [email protected] or request a demo here.