Congratulations, you’ve reached part three of our Ultimate App Store Test Series, which means it’s time to drive some traffic! This is an exciting moment: all of your hard work and dedication is about to be rewarded. But before you hit the gas, you need to go about traffic generation the right way.

There’s no shortcut – to get results that let you make actual decisions and meet KPIs, a successful test needs traffic. But staying within our framework will help you decrease cost and find the best audience to reach the most conclusive results.

Check all other articles in our Ultimate App Store Test Series:

Part 1: Building Hypotheses

Part 2: Creative Design

Part 4: Analyzing Results

In this article, we’ll teach you how to drive the right traffic to your tests so that you can get accurate information without spending a fortune. Put your foot down, we’re off.

How does app store page A/B testing work?

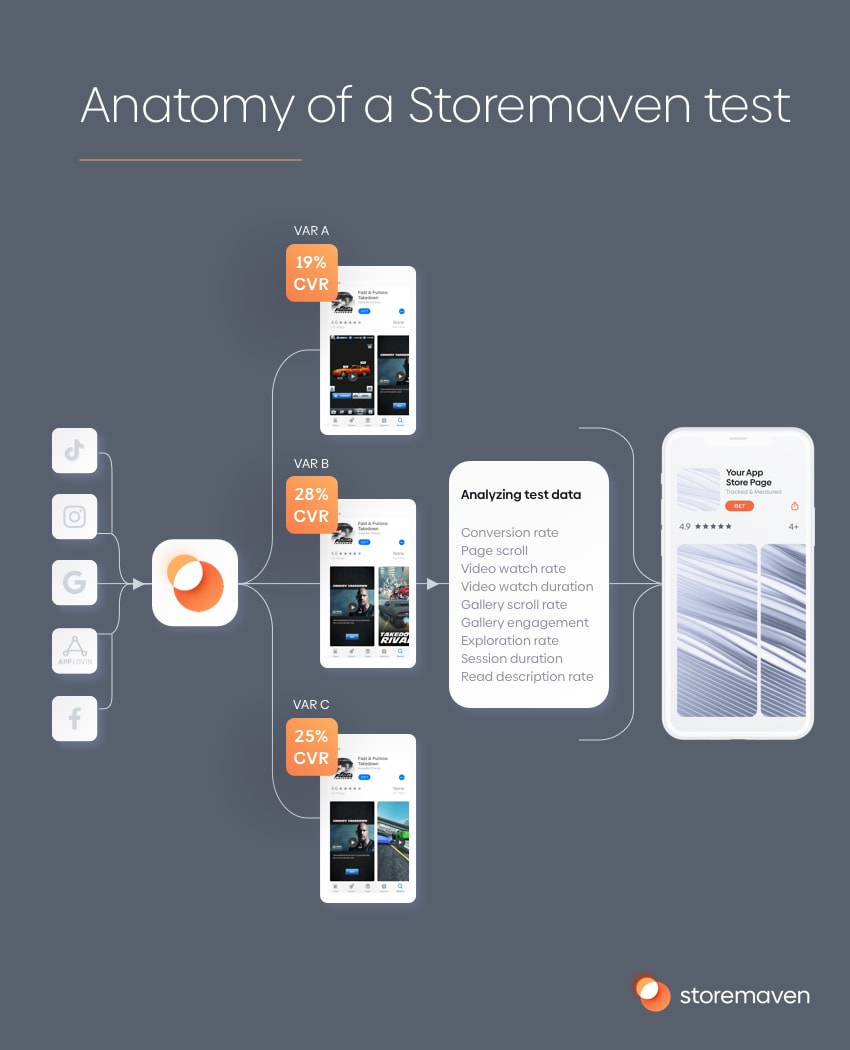

A quick refresher – app store testing involves creating a replicated mobile web environment that looks and feels like the real App Store, and also creating several variations of your app store page. As your replicated app store pages are mobile web pages, you need to drive a large enough sample to these pages to run a test.

With Storemaven, you’ll have a single link that will navigate users to your test, randomly and evenly distributing them to each variation.
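To make “randomly and evenly” concrete, here is a minimal sketch of how a single test link could split visitors across variations. It is an illustration only, not Storemaven’s implementation; the variation names and the `assign_variation` helper are hypothetical.

```python
import random
from collections import Counter

# Hypothetical variation names, for illustration only.
VARIATIONS = ["control", "variation_b", "variation_c"]

def assign_variation(rng: random.Random) -> str:
    """Pick one variation uniformly at random for an incoming visitor."""
    return rng.choice(VARIATIONS)

# Simulate 30,000 visitors arriving through the single test link.
rng = random.Random(42)
counts = Counter(assign_variation(rng) for _ in range(30_000))
for name in VARIATIONS:
    print(name, counts[name])
```

With a large enough sample, each variation ends up with roughly a third of the visitors, which is what lets you compare their conversion rates fairly.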

This is done by using any paid user acquisition channel and running a test campaign. Instead of driving users to your actual app store page, you’ll use your test link, which will send users to your test app store page variations – NOT the real app store.

Only when users decide to install your app and hit the GET/Install button on the test page will they be redirected to your actual app store page. By then, you’ve had a chance to track, measure, and analyze their behavior and how they interact with your page.

Why is app store test traffic so important?

App store test traffic is key to having a successful test. As someone in mobile growth, UA, or ASO, your focus shouldn’t be the “average” user. Instead, you’re seeking an audience that’s worth much more to you and your brand, whether that’s an audience that is more likely to make in-app purchases, that you can retain for a longer period of time, that registers for your service, etc. The end goal is to get more of these kinds of people to download your app.

That’s why you have to strategize your app store test traffic to make sure it represents the right audience for your app. Neglecting this part of the test will lead to misleading results, as you won’t be able to tell which creatives and messages convert more of the audience you care about, and won’t drive the KPIs that matter to you most. Setting your goals based on the wrong traffic can turn app store conversion rate into a double-edged sword.

Just think – if you put enough research and thought into your test traffic strategy and planning, you can rest assured that the results you get and the winning variation you see will lead you to make changes in the app store that drive more installs from the audiences you care about.

How do you choose which traffic you want the app store test to optimize for?

In order to choose which traffic to optimize for, you need to take two main things into consideration:

A. Your overall growth strategy

Many times, your company or app will have a strategic goal that the entire team aligns with. For example, a dating app might focus on converting more women than men because its marketplace is currently skewed, with an uneven men/women ratio.

Sometimes the goal will be to reach a specific audience. For example, product research might show that a certain demographic tends to become power users or high-LTV users.

Here’s another example: An event app might focus on “suburban mothers” after discovering that they are significantly more likely to organize events, and that their events on average get distributed to more guests.

In the world of mobile games, this strategy will usually translate to audiences that are more likely to make in-app purchases, as well as show high retention and engagement with the app.

B. Low-hanging fruit

In very simple terms, it’s extremely important to analyze your App Store Connect and Google Play Console data to see your specific mix of impressions and installs. Doing some “basic” reverse engineering helps you understand how you’ve been attracting and converting users thus far.

Maybe you’re getting a lot of search traffic for non-branded terms but not converting that traffic very well. Or perhaps you recently climbed the charts and you can leverage the increased exposure to “Browse/Explore” traffic in the app stores and optimize for that in order to broaden the audience to improve your growth.

By analyzing your paid traffic performance you can also identify the most important channels driving your growth, and discover audiences worth optimizing for that are coming in from these sources.

How do you create a test traffic campaign that mimics that audience?

After defining the audiences you currently want to optimize for, it’s time to create a test traffic campaign.

Channel

The channel you should use has to fit the channel you want to optimize for – with one caveat: Facebook, with its advanced targeting capabilities, can allow you to target a certain audience profile with a lot of flexibility so you can “mimic” the audience of your choice.

Based on our research, Facebook can act as a great proxy for organic traffic. It’s the source that best emulates a browsing/searching mindset, as Facebook users are in more of an explorative mode than users viewing an ad while playing another game (in-app ad network traffic). Facebook also allows you to get very granular when building audiences.

Given that your app store test is accessible for users through a single link, you can place that link as the destination for most types of ads in all mobile ad channels. These are the most popular channels used in testing:

- Snapchat

- TikTok

- Various ad networks such as Vungle, AppLovin, Unity, etc.

- Native mobile web, for apps where web traffic coming in from their main mobile website is significant.

Audience

Using the targeting capabilities in your channel of choice (Facebook or not) you can create an audience that serves as a great proxy for the audience you want to optimize for:

- Mimicking organic traffic – if you want to optimize for Browse/Explore traffic in the App Store or Google Play, you can use very broad targeting that mimics that audience. The audience that ends up browsing the app store doesn’t necessarily have an interest in your genre of app/game, know your brand, or even want a product like yours right now. Read more about testing for organic installs here.

- If you want to optimize for App Store branded search, you can target users who are aware of your brand, or even fans of it.

- For paid traffic, creating test traffic is more straightforward as you can use the same channel and the same targeting you use on the campaigns you want to optimize for.

A word about Sample Size and how much traffic you need to reach a statistically significant result

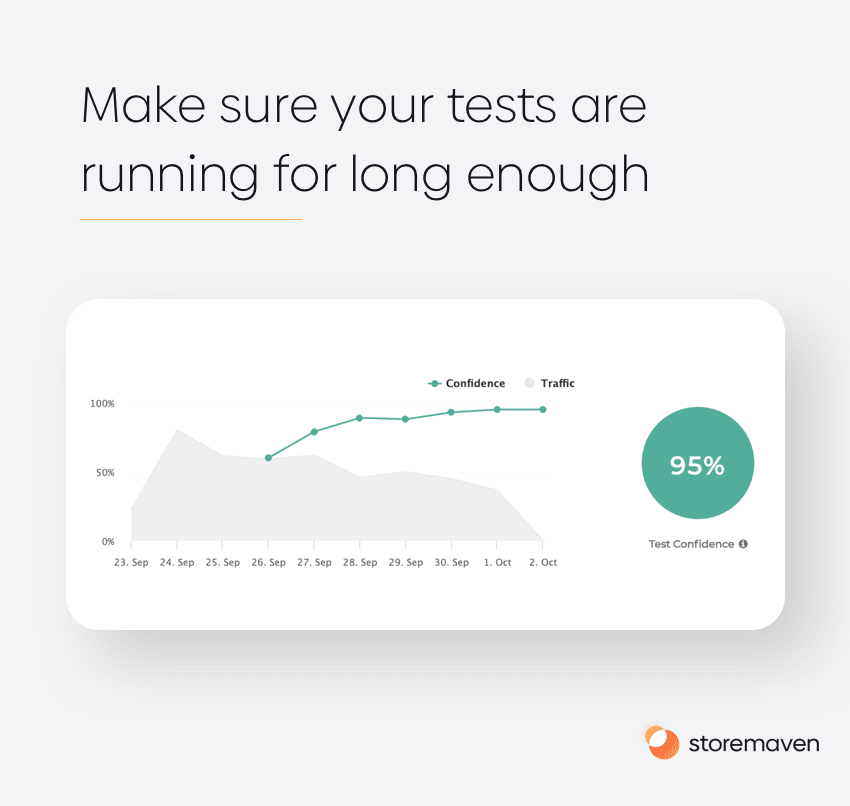

This is a crucial area where many app store tests fail. Concluding a test on a sample that is too small to reach a statistically significant result is the enemy of many mobile marketers (and of marketing folks outside of mobile).

The most common mistake marketers make is to run a test, check the results each day, and, when they see a result they like, stop and turn off the test campaign, going with whichever variant is winning at the time.

This method can lead to disastrous results (read: a 20% drop in conversion rates in the real store). Instead of really testing hypotheses, these marketers use the test as a vehicle to confirm whatever they want to be true: they simply wait until the results look the way they want and stop the test, with no regard for the actual scientific conclusion.
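To see why stopping on a result you like is so risky, here is a small simulation sketch. It runs many A/A tests, where both variations convert at exactly the same rate, so any declared “winner” is a false one. The 25% conversion rate, 100 visitors per variation per day, the 14-day length, and the classic two-proportion z-test at the 5% level are all assumptions chosen for illustration; they are not taken from any real test.

```python
import math
import random

def p_value(installs_a: int, installs_b: int, n: int) -> float:
    """Two-sided p-value of a two-proportion z-test with n visitors per arm."""
    pooled = (installs_a + installs_b) / (2 * n)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation in outcomes, nothing to detect
    se = math.sqrt(2 * pooled * (1 - pooled) / n)
    z = abs(installs_a - installs_b) / n / se
    return math.erfc(z / math.sqrt(2))

def simulate(experiments=500, days=14, visitors_per_day=100, rate=0.25, seed=1):
    """Return (share of A/A tests 'won' on any daily peek, share 'won' on the last day)."""
    rng = random.Random(seed)
    stopped_early = 0  # a "winner" showed up on at least one daily check
    final_only = 0     # a "winner" showed up at the planned end of the test
    for _ in range(experiments):
        a = b = 0
        significant_days = []
        for day in range(1, days + 1):
            a += sum(rng.random() < rate for _ in range(visitors_per_day))
            b += sum(rng.random() < rate for _ in range(visitors_per_day))
            significant_days.append(p_value(a, b, day * visitors_per_day) < 0.05)
        stopped_early += any(significant_days)
        final_only += significant_days[-1]
    return stopped_early / experiments, final_only / experiments

peeking_rate, fixed_rate = simulate()
print(f"false winners with daily peeking: {peeking_rate:.1%}")
print(f"false winners at the planned end: {fixed_rate:.1%}")
```

Because the peeking marketer stops on any day that looks significant, their false-winner rate can never be lower than the rate for someone who only checks at the planned end, and in practice it is usually several times higher.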

Other mistakes stem from miscalculating the sample size required to perform a statistical hypothesis test, and using a sample size that is simply too low to really reach any conclusion.

These mistakes cost you twice. Not only have you wasted a ton of time (hypothesizing, developing test creatives, etc.) and a test budget, you’ve also implemented a “winning” app store page variation as your production app store page with no scientific basis to assume it will perform better.

The solution is to use a Bayesian-based model. Storemaven has developed its own algorithm for such tests, dubbed StoreIQ. In short, the Bayesian method lets you drive traffic to the test and get back the winning probability of each app store page variation over time, by running tens of thousands of simulations based on the actual, cumulative test results each day. This has two advantages:

- Once a certain variation has a very low probability of winning the test, StoreIQ will stop sending test traffic to that variation, saving you up to 50% of the test traffic cost you would have spent if you used a different testing methodology.

- Once you have a test winner, StoreIQ will inform you automatically that you sent a sufficient sample size and you can stop the test.

You don’t need to worry about calculating sample sizes, or closing the test too early. With StoreIQ you always get a statistically significant result you can trust for the minimum amount of traffic possible.
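For intuition, the idea of “winning probabilities from simulations” can be sketched in a few lines. This is a generic Beta-Binomial Monte Carlo estimate, not StoreIQ itself, whose internals aren’t described here. The uniform prior, the `win_probabilities` helper, and the install and visitor counts are all assumptions for illustration.

```python
import random

def win_probabilities(results, draws=20_000, seed=0):
    """Estimate each variation's probability of having the highest conversion rate.

    `results` maps a variation name to (installs, visitors). Each variation gets
    a Beta(1 + installs, 1 + non-installs) posterior, i.e. a uniform prior.
    """
    rng = random.Random(seed)
    wins = {name: 0 for name in results}
    for _ in range(draws):
        # Draw one plausible conversion rate per variation from its posterior.
        sampled = {
            name: rng.betavariate(1 + installs, 1 + visitors - installs)
            for name, (installs, visitors) in results.items()
        }
        # Credit the variation with the highest sampled rate in this draw.
        wins[max(sampled, key=sampled.get)] += 1
    return {name: count / draws for name, count in wins.items()}

# Hypothetical cumulative results so far: (installs, visitors).
cumulative = {
    "control": (200, 1000),
    "variation_b": (250, 1000),
    "variation_c": (150, 1000),
}
probs = win_probabilities(cumulative)
for name, p in probs.items():
    print(f"{name}: {p:.1%}")
```

Rerunning this on each day’s cumulative numbers gives a winning probability per variation over time. A variation whose probability stays near zero is a candidate for having its traffic cut, which is the kind of saving described above.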

Although traffic requirements vary by conversion rate, you usually need 200-250 “installs” per variation in order to conclude the test.
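Here is a rough way to turn that install target into a traffic target. The sketch assumes you already have an estimate of your test page conversion rate (visitors who tap GET/Install); the `visitors_needed` helper and the example numbers are hypothetical.

```python
import math

def visitors_needed(installs_per_variation: int, conversion_rate: float, variations: int) -> int:
    """Visitors required across the whole test to hit the install target per variation."""
    per_variation = math.ceil(installs_per_variation / conversion_rate)
    return per_variation * variations

# Example: 250 installs per variation, an assumed 25% conversion rate, 3 variations.
print(visitors_needed(250, 0.25, 3))  # 1,000 visitors per variation, 3,000 in total
```

The lower your conversion rate, the more visitors each variation needs, which is why traffic requirements vary from app to app.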

If you’re considering a testing platform claiming to provide you a result with fewer installs, sometimes even arguing you’ll get accurate results with 50 installs per variation, beware. It’s likely that the result you get will be no better than a coin toss, so why run a test in the first place?

Don’t forget to test multiple audiences

You may know exactly which audience segment currently responds best to your app or game. But that doesn’t mean you should stop testing other audiences. The mobile app industry is constantly changing, as are user preferences. You need to stay on top of these changes.

We suggest testing multiple audience segments so that your data is always up to date and you’re never missing out on lucrative opportunities.

With Storemaven you can see your test performance segmented by audience, so you can learn whether different audiences had different preferences and which creatives and messaging are more likely to convert each of them to installs.

A framework for traffic planning

These are the different factors you need to take into consideration when planning the traffic for your next test:

1. The ad – The ad should be as generic as possible so that there is no bias towards any of the variations. Ad creatives that look similar to your current app store page will create a bias towards the control.

2. Daily budget – It’s important to set up a daily budget for the tests and not an overall budget (in order to spread the traffic evenly while the test is running).

3. Test length – This is a question we get often: how long should you test for? The plain answer: keep the test running for at least seven days. That’s enough days to show a clear trend and to avoid bias from any particular day of the week.

4. Budget – Calculating the testing budget is crucial for getting statistically valid results. It also plays an important role when planning your testing roadmap for the future. Once you know the number of variants in your test, you can estimate its cost using either your CPIs or your CPC/CPM.

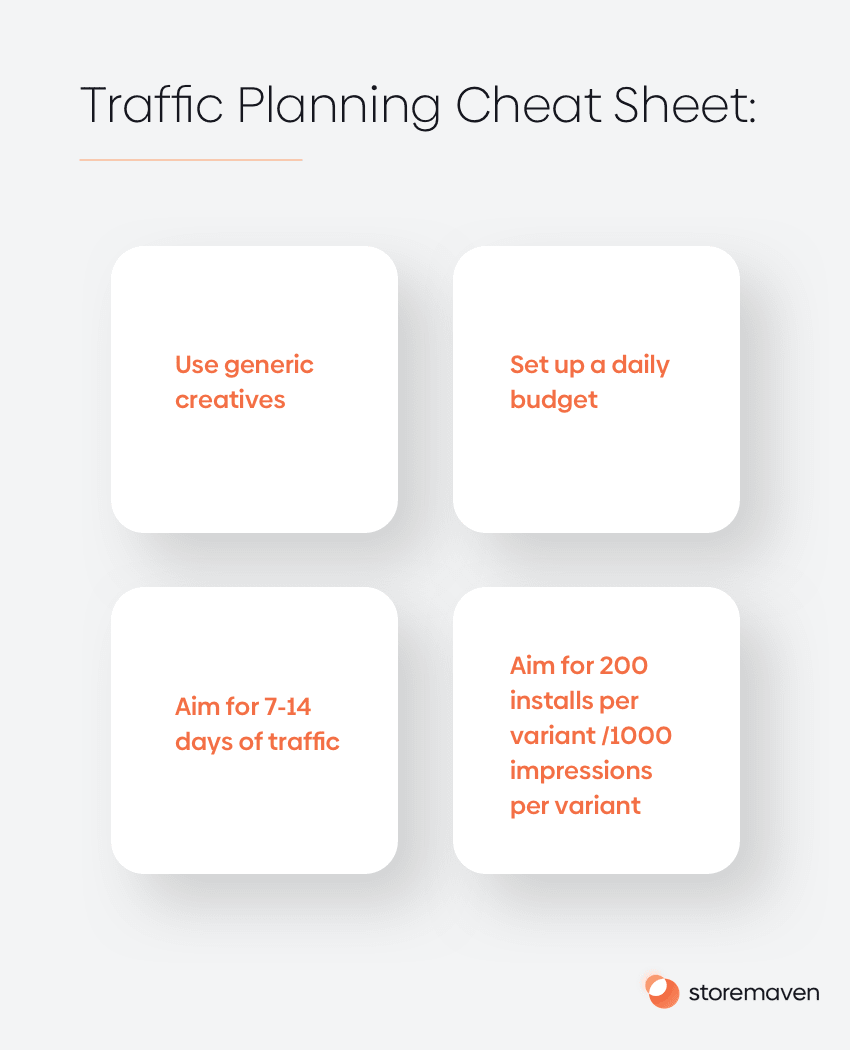
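The budget estimate can be sketched as simple arithmetic. The helper names and all prices below are hypothetical, and using your regular campaign CPI as a stand-in for the cost of a test “install” is an assumption you should check against your own data.

```python
import math

def budget_from_cpi(installs_per_variant: int, variants: int, cpi: float) -> float:
    """Estimate total cost from a cost-per-install figure."""
    return installs_per_variant * variants * cpi

def budget_from_cpc(installs_per_variant: int, variants: int,
                    conversion_rate: float, cpc: float) -> float:
    """Estimate total cost from cost-per-click and the test page conversion rate."""
    clicks_per_variant = math.ceil(installs_per_variant / conversion_rate)
    return clicks_per_variant * variants * cpc

def daily_budget(total_budget: float, days: int) -> float:
    """Spread the total evenly across the days the test will run."""
    return total_budget / days

# Example: 200 installs per variant, 3 variants, an assumed $2.50 CPI.
total = budget_from_cpi(200, 3, 2.50)
print(total)                    # 1500.0
print(daily_budget(total, 10))  # 150.0 per day over a 10-day test

# The same test priced by clicks: assumed $0.50 CPC and 25% conversion rate.
print(budget_from_cpc(200, 3, 0.25, 0.50))  # 1200.0
```

Working out the daily figure up front makes it easier to set a daily budget in your ad channel rather than an overall one.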

So here’s your cheat sheet:

- Use generic creatives.

- Set up a daily budget.

- Aim for 7-14 days of traffic.

- Aim for 200 installs per variant/1000 impressions per variant.

What’s Next?

Once all this sinks in (well done for getting to the end!), you’re ready to drive quality traffic to your tests and after that, there’s only one thing left to do: analyze your results and implement the best-performing creatives. To get all the details on this final step of the app testing cycle, check out part four in the series – coming soon.